Data Movement

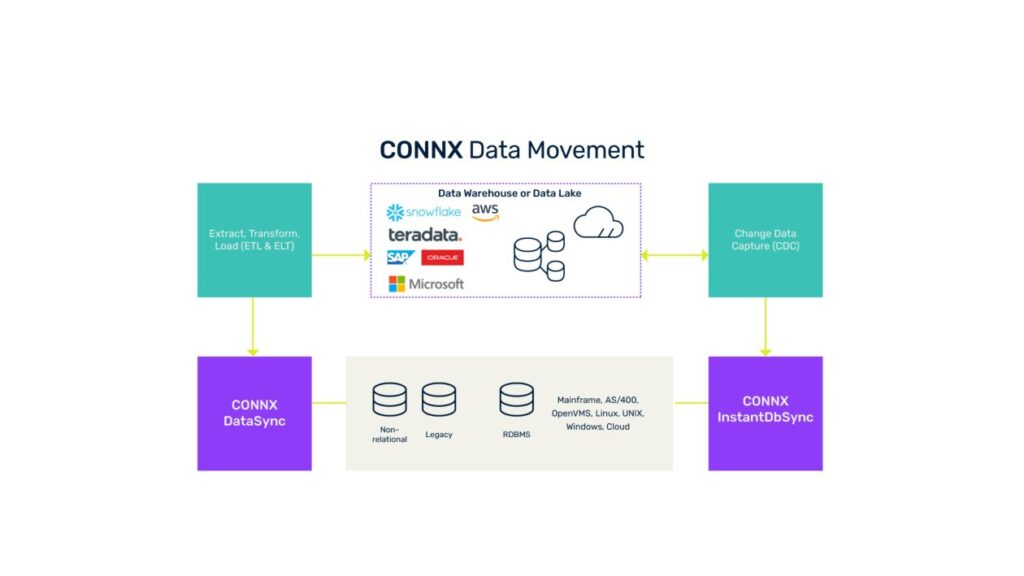

Move, replicate and synchronize your data from legacy systems to the cloud and everything in between to power your data-driven enterprise.

What is data movement?

Data movement is the ability to move data from one place in your organization to another through technologies that include extract, transform, load (ETL); extract, load, transform (ELT); data replication; and change data capture (CDC), primarily for the purposes of data migration and data warehousing.
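The practical difference between ETL and ELT is where the transform step runs: in the pipeline before loading, or inside the target after loading. The minimal sketch below illustrates this with Python's built-in `sqlite3` module, using in-memory databases to stand in for a source system and a warehouse. The table names (`orders`, `orders_raw`, `orders_clean`) and the cleansing rule are hypothetical examples and are not taken from CONNX.

```python
import sqlite3

def make_source() -> sqlite3.Connection:
    """Hypothetical source system with a small, slightly messy orders table."""
    src = sqlite3.connect(":memory:")
    src.execute("CREATE TABLE orders (id INTEGER PRIMARY KEY, region TEXT, amount_cents INTEGER)")
    src.executemany(
        "INSERT INTO orders VALUES (?, ?, ?)",
        [(1, " east ", 1250), (2, "WEST", 990), (3, "east", 500)],
    )
    return src

def etl(src: sqlite3.Connection, dw: sqlite3.Connection) -> None:
    """Extract rows, transform them in the pipeline, then load only the cleaned result."""
    rows = src.execute("SELECT id, region, amount_cents FROM orders").fetchall()
    cleaned = [(i, r.strip().lower(), c / 100) for i, r, c in rows]  # transform before load
    dw.execute("CREATE TABLE orders_clean (id INTEGER PRIMARY KEY, region TEXT, amount REAL)")
    dw.executemany("INSERT INTO orders_clean VALUES (?, ?, ?)", cleaned)

def elt(src: sqlite3.Connection, dw: sqlite3.Connection) -> None:
    """Extract rows, load them unchanged, then transform inside the target with SQL."""
    rows = src.execute("SELECT id, region, amount_cents FROM orders").fetchall()
    dw.execute("CREATE TABLE orders_raw (id INTEGER PRIMARY KEY, region TEXT, amount_cents INTEGER)")
    dw.executemany("INSERT INTO orders_raw VALUES (?, ?, ?)", rows)
    dw.execute(  # transform after load, using the target's own SQL engine
        "CREATE TABLE orders_clean AS "
        "SELECT id, lower(trim(region)) AS region, amount_cents / 100.0 AS amount FROM orders_raw"
    )

src = make_source()
etl_dw, elt_dw = sqlite3.connect(":memory:"), sqlite3.connect(":memory:")
etl(src, etl_dw)
elt(src, elt_dw)
q = "SELECT id, region, amount FROM orders_clean ORDER BY id"
print(etl_dw.execute(q).fetchall() == elt_dw.execute(q).fetchall())  # True
```

Both paths end with the same cleaned table; ELT additionally keeps the raw copy in the target, which lets you re-run or change the transformation later without extracting again.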

Your organization’s IT infrastructure and application landscape are continuously changing, and your applications need data from a range of databases. This means you need efficient and secure data movement solutions to shift data across your systems without impacting the performance of your sources.

Data movement, data synchronization and data replication are complementary methods of data integration. Together, they enable you to deliver fresh data to keep your databases, data warehouse, big data and cloud systems current.

Types of data movement tools

Replication

Offers a dependable, low-impact method of creating an accurate, up-to-date copy of your single- or multiple-source data, which can be made available to anyone who needs it, wherever and whenever they work. You can stay in control of replicated databases with flexible configurations of archiving and retention rules, data relocation and storage. Your data remains accessible through the original application or through any analytics tools you have in place.

Database synchronization

Enables you to keep your replicated data fresh, so your users and applications are working from the most current information available. You can update replicated databases with either a batch-oriented (pull) or real-time (push) configuration. For many relational databases, you can synchronize new data in near real time with a capability called change data capture.
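A batch-oriented (pull) synchronization is commonly built around a high-water mark: the job remembers the largest change timestamp it has already copied and, on each run, pulls only rows changed after it. The sketch below assumes a hypothetical `customers` table whose `updated_at` column is set on every insert and update and always increases; it uses SQLite's upsert syntax (`ON CONFLICT ... DO UPDATE`, SQLite 3.24 or later) through Python's `sqlite3` module. It is an illustration of the general technique, not how CONNX implements synchronization.

```python
import sqlite3

SCHEMA = "CREATE TABLE customers (id INTEGER PRIMARY KEY, name TEXT, updated_at INTEGER)"

def pull_sync(src: sqlite3.Connection, dst: sqlite3.Connection, watermark: int) -> int:
    """Copy rows changed after `watermark` from src to dst and return the new watermark."""
    changed = src.execute(
        "SELECT id, name, updated_at FROM customers WHERE updated_at > ? ORDER BY updated_at",
        (watermark,),
    ).fetchall()
    # Upsert: insert new keys, overwrite existing ones with the newer values.
    dst.executemany(
        "INSERT INTO customers (id, name, updated_at) VALUES (?, ?, ?) "
        "ON CONFLICT(id) DO UPDATE SET name = excluded.name, updated_at = excluded.updated_at",
        changed,
    )
    dst.commit()
    # Advance the watermark only as far as the data actually copied.
    return max((row[2] for row in changed), default=watermark)

src, dst = sqlite3.connect(":memory:"), sqlite3.connect(":memory:")
for db in (src, dst):
    db.execute(SCHEMA)
src.executemany("INSERT INTO customers VALUES (?, ?, ?)", [(1, "Ada", 100), (2, "Grace", 105)])

mark = pull_sync(src, dst, watermark=0)      # first run copies both rows
src.execute("UPDATE customers SET name = 'Grace H.', updated_at = 110 WHERE id = 2")
src.execute("INSERT INTO customers VALUES (3, 'Edsger', 112)")
mark = pull_sync(src, dst, watermark=mark)   # second run copies only ids 2 and 3
print(mark, dst.execute("SELECT * FROM customers ORDER BY id").fetchall())
```

A watermark pull cannot see rows that were deleted at the source, and it can miss changes if timestamps are not strictly increasing; reading the database's change log, as change data capture does, avoids both problems.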

Why move data?

Comprehensive data movement and transformation capabilities are essential to modernize and extend your IT portfolio. For example, you may need data movement tools to move data from your legacy systems and platforms to cloud databases. ETL and ELT are data integration processes that combine data from multiple sources into a single data store. This capability is valuable for meeting hybrid integration requirements, such as connecting legacy data sources to a data warehouse environment and transforming their data, or moving data from transactional databases to a big data or data lake environment.

Use cases include:

Database replication

Replicate a database for disaster recovery, faster analytics at multiple locations, and more efficient use of distributed resources.

Cloud data warehousing

Gain confidence that your data warehouse contains the most up-to-date information from across your organization, including from legacy databases and platforms, while minimizing the impact on the uptime and performance of the underlying systems. Move data to cloud databases, such as MySQL to Microsoft® Azure® SQL Database, Oracle to Amazon® RDS, or SQL Server® to Amazon Redshift®. Extend ETL capabilities to connect and transform legacy data sources to a data warehouse environment, such as OpenVMS® Rdb to Teradata®.

Hybrid data movement

Manage data movement between on-premises systems and cloud services to balance innovation, performance and business continuity. Move on-premises data to the cloud to generate insights and benefit from on-demand elastic services. Move data from cloud applications to the mainframe to keep the data in your system of record accurate and complete.

Cloud data lakes

Use a data movement solution to shift data from transactional databases, such as Adabas, VSAM or IMS, to a big data or data lake environment, such as Hadoop®, Snowflake®, AWS and Azure®.

Data archiving

Schedule archives of your core data to proactively manage the growth of your databases and keep your systems running at peak performance. Boost compliance with regulatory guidelines for data capture to enable traceability and future audits.
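A scheduled archive job often comes down to moving rows older than a retention cutoff into an archive table inside a single transaction, so that a failure midway neither loses nor duplicates rows. The minimal sketch below uses Python's `sqlite3` module; the `events` and `events_archive` tables, the ISO-8601 `created` column and the cutoff date are hypothetical, and real retention rules and schedules would come from your own policy rather than from this code.

```python
import sqlite3

def archive_older_than(db: sqlite3.Connection, cutoff: str) -> int:
    """Move rows created before `cutoff` (ISO date string) into events_archive.

    Returns the number of rows moved. Both statements run in one transaction:
    the `with db:` block commits on success and rolls back on any exception.
    """
    with db:
        db.execute("INSERT INTO events_archive SELECT * FROM events WHERE created < ?", (cutoff,))
        moved = db.execute("DELETE FROM events WHERE created < ?", (cutoff,)).rowcount
    return moved

db = sqlite3.connect(":memory:")
db.execute("CREATE TABLE events (id INTEGER PRIMARY KEY, created TEXT, payload TEXT)")
db.execute("CREATE TABLE events_archive (id INTEGER PRIMARY KEY, created TEXT, payload TEXT)")
db.executemany(
    "INSERT INTO events VALUES (?, ?, ?)",
    [(1, "2021-03-01", "a"), (2, "2022-07-15", "b"), (3, "2024-01-09", "c")],
)
db.commit()

moved = archive_older_than(db, "2023-01-01")  # ISO dates compare correctly as strings
print(moved, db.execute("SELECT id FROM events").fetchall())
```

Running a job like this on a schedule keeps the live table small, while the archive table preserves the older rows for traceability and audits.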

Benefits of data movement using CONNX

Integrate all your data sources

Move, replicate and synchronize data from a wide spectrum of databases (legacy, relational, big data and cloud) residing on platforms such as mainframe, OpenVMS, iSeries (AS/400), UNIX®, Linux®, Windows® and the desktop. CONNX offers 150+ database adapters, the industry’s largest range, to deliver standard SQL connectivity. Connect to any ODBC-, OLE DB-, JDBC®-, J2EE®- or .NET®-compliant application, report writer or development tool.

Move data seamlessly

Use the CONNX data movement solution to synchronize your cloud-based data warehouse with legacy and non-relational data sources such as Adabas, VSAM™, IMS™, Rdb and RMS in an easy, standardized way without disrupting operations.

Replicate in real time

Share event-driven data using CDC technology. Capture, transform and replicate data from a selection of sources to a number of target data systems (such as databases, data warehouses, big data, cloud and digital platforms). Keep your data movement efficient by incrementally updating only the records that have changed.
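CDC tools typically read the source database's change log and emit an ordered stream of insert, update and delete events, so the target only touches the records that changed. The sketch below applies such a stream to an in-memory replica. The `ChangeEvent` shape (an operation code, a primary key and an after-image of the row) is a hypothetical simplification, not the CONNX CDC format; production streams also carry transaction boundaries and log positions for restart.

```python
from dataclasses import dataclass
from typing import Dict, Iterable, Optional

@dataclass(frozen=True)
class ChangeEvent:
    op: str                       # "I" insert, "U" update, "D" delete (hypothetical codes)
    key: int                      # primary key of the affected row
    row: Optional[dict] = None    # after-image of the row; None for deletes

def apply_changes(replica: Dict[int, dict], events: Iterable[ChangeEvent]) -> int:
    """Apply change events in log order to `replica`; return how many were applied."""
    applied = 0
    for ev in events:
        if ev.op in ("I", "U"):
            replica[ev.key] = dict(ev.row)   # upsert the after-image (copy, not alias)
        elif ev.op == "D":
            replica.pop(ev.key, None)        # tolerate deletes of rows never replicated
        else:
            raise ValueError(f"unknown operation {ev.op!r}")
        applied += 1
    return applied

replica = {1: {"sku": "A-1", "qty": 5}, 2: {"sku": "B-7", "qty": 0}}
stream = [
    ChangeEvent("U", 1, {"sku": "A-1", "qty": 3}),
    ChangeEvent("I", 3, {"sku": "C-2", "qty": 12}),
    ChangeEvent("D", 2),
]
applied = apply_changes(replica, stream)
print(applied, replica)
```

Order matters: applying the same events out of sequence could resurrect a deleted row or overwrite a newer value, which is why CDC pipelines preserve log order per key.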

Synchronize efficiently

Transformation settings give you full control over scheduling incremental or full data movements, launching pre- or post-sync tasks and setting up automatic email event notifications. You can scale your synchronization tasks to demand, such as scheduling updates for low-use periods, or running them as often as every minute when your business requires more up-to-date information. You can also add CONNX to Microsoft® SSIS for advanced SQL Server® support, making it easy to harvest changed data from the SQL Server database for efficient incremental updates.

Comprehensive transformation and ETL

SQL-based transformation with a graphical query builder allows comprehensive mapping of tables and fields. A library of more than 500 transformation functions provides many ways to map the value of a field, including complex transformations such as filters, sorts, joins, unions and aggregations.
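A single SQL-based mapping can combine several of these operations. The sketch below runs one with Python's `sqlite3` module: it unions two order sources, filters out cancelled orders, joins to a customer lookup table, aggregates revenue per region and sorts the result. The tables and the mapping are hypothetical examples written by hand, not output from the CONNX query builder.

```python
import sqlite3

db = sqlite3.connect(":memory:")
db.executescript("""
    CREATE TABLE legacy_orders (customer_id INTEGER, amount REAL, status TEXT);
    CREATE TABLE cloud_orders  (customer_id INTEGER, amount REAL, status TEXT);
    CREATE TABLE customers     (id INTEGER PRIMARY KEY, region TEXT);
    INSERT INTO legacy_orders VALUES (1, 40.0, 'SHIPPED'), (2, 15.0, 'CANCELLED');
    INSERT INTO cloud_orders  VALUES (1, 10.0, 'SHIPPED'), (3, 25.0, 'SHIPPED'), (2, 30.0, 'SHIPPED');
    INSERT INTO customers     VALUES (1, 'EMEA'), (2, 'APAC'), (3, 'EMEA');
""")

# One mapping: union two sources, filter, join to a lookup table, aggregate, sort.
MAPPING = """
    SELECT c.region, COUNT(*) AS orders, SUM(o.amount) AS revenue
    FROM (
        SELECT customer_id, amount, status FROM legacy_orders
        UNION ALL
        SELECT customer_id, amount, status FROM cloud_orders
    ) AS o
    JOIN customers AS c ON c.id = o.customer_id
    WHERE o.status <> 'CANCELLED'
    GROUP BY c.region
    ORDER BY revenue DESC
"""
result = db.execute(MAPPING).fetchall()
print(result)  # [('EMEA', 3, 75.0), ('APAC', 1, 30.0)]
```

`UNION ALL` is used rather than `UNION` so that identical orders from the two sources are both kept; `UNION` would silently remove duplicates before aggregation.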

Stay in control

CONNX data replication solutions put you in complete control with flexible configurations of archiving and retention rules, data relocation and storage. Your data remains accessible through the original application or through any analytics tools you have in place.

Secure access

CONNX Security is ideally suited to handle cross-platform security issues by managing multiple database logins and securing access to the data in each system.

Manage metadata

Create a lexicon that combines all your data into a single, comprehensible structure without altering the source structures.